Harness the Power of Treasury Analytics

“There were 5 exabytes of information created between the dawn of civilization through 2003,” Eric Schmidt, then CEO of Google, said in 2010, “but that much information is now created every 2 days, and the pace is increasing.”

Today, data has become the norm for almost all businesses. At KyribaLive 2023, Vincent Siccardi, Data Product Director at Kyriba, and Craig Jeffrey, Managing Partner at Strategic Treasurer, led a discussion on the intersection of big data and treasury, the value of AI in treasury operations and treasury analytics, and the opportunities for treasury to truly transform business decision-making.

The Groundhog Day of Data Processing

When it comes to dealing with big data, the process can feel like the movie “Groundhog Day.” Like the movie’s main character, who relives the same day over and over again, treasury professionals often find themselves going through a repetitive cycle of collecting, organizing, and analyzing data.

However, with the proper mindset and tools, this process can become a journey of self-discovery and continuous learning. Those who lack them can get stuck in a never-ending loop of inefficiency.

One way out of this “Groundhog Day” loop is to establish a self-discovery process: the ability of business users, analysts, or decision-makers to explore and analyze data independently, without necessarily relying on IT or data science teams. It lets users generate their own insights, make data-driven decisions and continuously learn from the data they work with.

“If I have more data, I can quickly do self-discovery,” Jeffrey said. Using data visualization tools, treasury analytics software and other technologies, today’s treasurers can conduct ad hoc queries, create their own reports, and drill down into data as needed, without having to wait for a data professional to do it for them. As Jeffrey emphasized, the end point is not a single conclusion or insight, but rather an ongoing process of learning and discovering new insights from the data.

Cash flow predictive analytics can be a great use case for the self-discovery process, because cash inflows and outflows in any organization are so dynamic. By analyzing historical cash flow data and incorporating external factors such as market trends, economic indicators and customer behavior, treasurers can gain insights into future cash flow patterns and help management teams make more informed and timely decisions.
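To make the idea concrete, here is a minimal sketch of a forecast built only from historical data. It assumes a simple moving average, which is one of many possible techniques and not necessarily what any treasury platform uses. The function name, the sample figures and the window size are all hypothetical, and external factors such as market trends are deliberately left out.

```python
from statistics import mean


def moving_average_forecast(history, window=3, horizon=2):
    """Forecast `horizon` future points, each the mean of the last `window` values."""
    if window < 1 or len(history) < window:
        raise ValueError("need at least `window` historical observations")
    series = list(history)
    for _ in range(horizon):
        # Each forecast point is appended and feeds into the next average.
        series.append(mean(series[-window:]))
    return series[len(history):]


# Hypothetical weekly net cash flows (inflows minus outflows), in thousands.
weekly_net_cash = [120.0, 90.0, 150.0, 110.0, 130.0]
forecast = moving_average_forecast(weekly_net_cash, window=3, horizon=2)
print(forecast)
```

A real forecast would typically add seasonality, known future payments and external indicators on top of a baseline like this one.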

The Shift to a Data Mindset

To truly harness the transformative power of big data in treasury, it is important to cultivate a data mindset. But what does having a data mindset entail?

At its core, a data mindset considers data as the bedrock of any system. Organizations must recognize the pivotal role of data and invest in technologies that enable system scalability and adaptability. As Jeffrey emphasized, “data is the foundation of any system.”

One such technology that amplifies treasury analytics capabilities is in-memory computing. This technology can empower treasury professionals to process colossal datasets in real time and convert them into actionable insights almost immediately. This is a big step forward from traditional data extraction methods, which were often laborious and slow: in-memory computing stores data directly within the computer's memory, removing the need for time-consuming data movements or pre-aggregations.

Combined with cash flow predictive analytics, in-memory computing applies the speed of computer memory to data processing. It also alleviates disk-based I/O bottlenecks, allowing treasurers to perform complex calculations and queries on large datasets rapidly. This efficiency paves the way for the quick discovery of patterns, trends and anomalies.

In-memory computing also liberates treasurers from pre-defined reports or rigid data models. Instead, they can perform on-demand analysis, explore data spontaneously and drill down into specific details to uncover valuable insights that may have remained hidden under traditional analysis methods.
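As a rough illustration of on-demand analysis, the sketch below keeps a handful of transactions in memory and lets the user pick the grouping and filters at query time, with no pre-built report. The field names, sample records and the `drill_down` helper are hypothetical; production systems use far more sophisticated engines.

```python
from collections import defaultdict

# Hypothetical transactions held entirely in memory.
transactions = [
    {"entity": "US", "bank": "Bank A", "category": "payroll", "amount": -500.0},
    {"entity": "US", "bank": "Bank A", "category": "receipts", "amount": 800.0},
    {"entity": "US", "bank": "Bank B", "category": "payroll", "amount": -200.0},
    {"entity": "DE", "bank": "Bank C", "category": "receipts", "amount": 300.0},
    {"entity": "DE", "bank": "Bank C", "category": "suppliers", "amount": -150.0},
]


def drill_down(rows, *dimensions, **filters):
    """Sum amounts grouped by `dimensions`, keeping rows that match all `filters`."""
    totals = defaultdict(float)
    for row in rows:
        if all(row.get(field) == value for field, value in filters.items()):
            key = tuple(row[dim] for dim in dimensions)
            totals[key] += row["amount"]
    return dict(totals)


# Start broad, then drill into one entity without defining a new report.
by_entity = drill_down(transactions, "entity")
us_by_category = drill_down(transactions, "category", entity="US")
print(by_entity)
print(us_by_category)
```

The point of the design is that the grouping dimensions are arguments, not a fixed schema, so each new question is just another call.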

Recent years have seen not only an increase in data volume, but also a remarkable enhancement in data quality. As Jeffrey acknowledged, “data used to be terrible”: it lacked synchronization, association and structure, and in some instances it was not even accessible. Advancements in data management have addressed these issues, enabling the extraction of meaningful insights from data.

Structural Data and Enriched Analysis

Structural data plays a vital role in making sense of the vast amounts of data in treasury operations. Ignoring the structural framework could mean missing vital insights. “Structural data puts a skeleton in place so you can see the relationship between entities,” Jeffrey said. “[It] helps you make sense of things,” he later added.

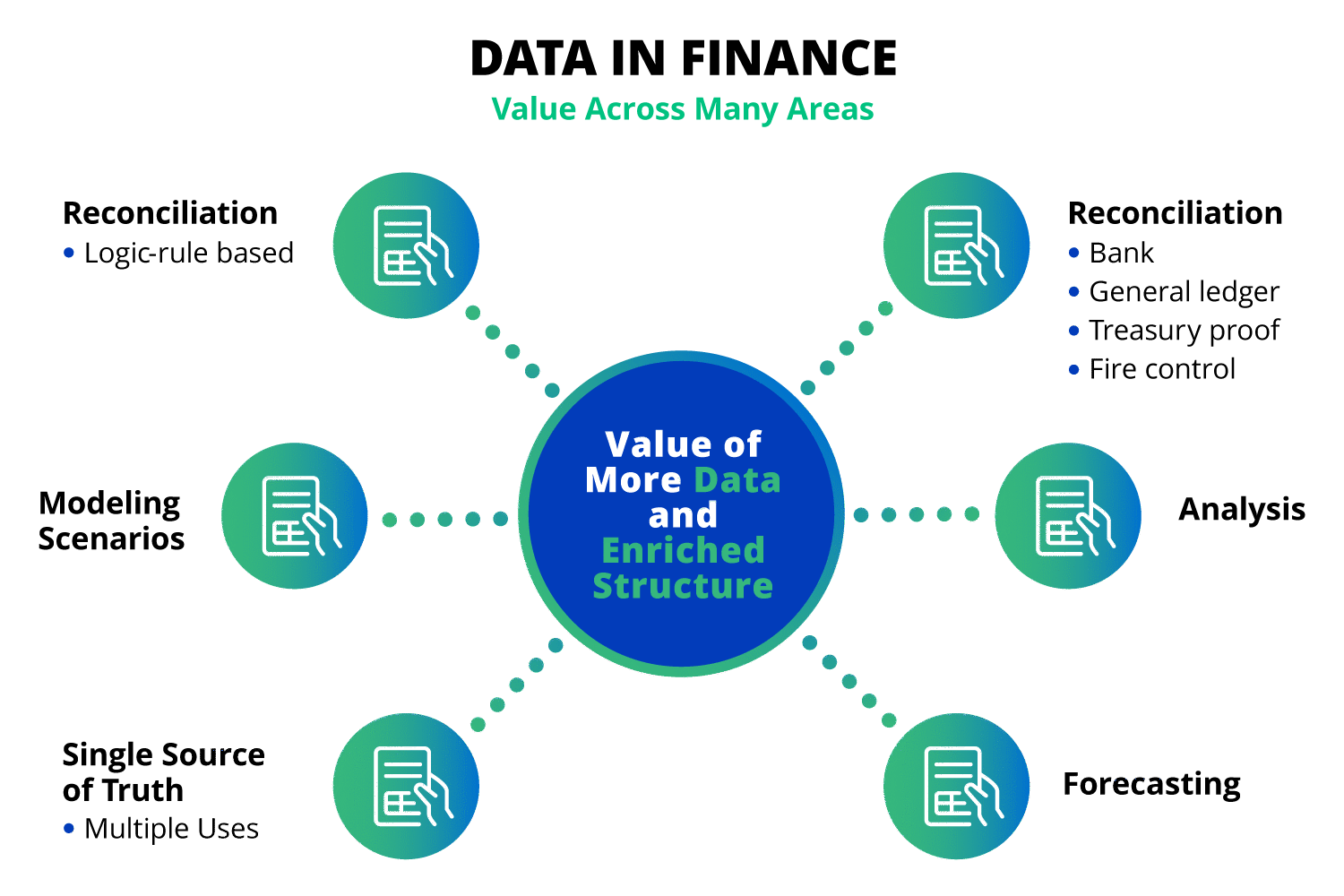

Establishing relationships and hierarchies between entities and associating them with relevant attributes allows treasury and finance professionals to gain a deeper understanding of their financial landscape. The enriched analysis also contributes to improved risk management, reconciliation and forecasting, and supports accounting rules.
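One way to picture this “skeleton” is a simple parent-child map between entities. The sketch below, with hypothetical entity names and balances, rolls each subsidiary's balance up through its regional parent to the group level. It assumes the hierarchy contains no cycles.

```python
# Hypothetical entity hierarchy: each child maps to its parent.
PARENT = {
    "US Sub": "Americas",
    "BR Sub": "Americas",
    "DE Sub": "EMEA",
    "Americas": "Group",
    "EMEA": "Group",
}

# Hypothetical balances recorded at the subsidiary level, in thousands.
BALANCES = {"US Sub": 400.0, "BR Sub": 100.0, "DE Sub": 250.0}


def rolled_up_balances(parent, balances):
    """Add each entity's balance to itself and to every ancestor above it."""
    totals = {}
    for entity, balance in balances.items():
        node = entity
        while node is not None:
            totals[node] = totals.get(node, 0.0) + balance
            node = parent.get(node)  # None once the top of the tree is reached
    return totals


totals = rolled_up_balances(PARENT, BALANCES)
print(totals)
```

Once the relationships are explicit, the same balances can be viewed per subsidiary, per region or for the whole group without re-keying any data.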

Payment formats serve as excellent illustrations of how structural data can elevate analysis. Consider older formats, like BAI and MT940, which often fall short in terms of structure and expandability. These formats allow only a limited amount of information to be attached to each transaction. XML, by contrast, offers a structured format packed with essential data descriptors, facilitating more accurate interpretation and analysis. It also makes it simple to incorporate additional data, be it new payment channels or different transaction types, supporting a more comprehensive understanding of the overall payments information.
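The small sketch below shows why descriptive tags help. The XML fragment is hypothetical and heavily simplified: its tag names are illustrative only and do not follow any real schema such as ISO 20022. Because every value carries its own label, a few lines of standard-library code can read it, and a new field such as the payment channel can be added without breaking the parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified payment record. Tag names are illustrative only
# and are NOT taken from a real standard.
PAYMENT_XML = """
<Payment>
  <Amount currency="EUR">1250.00</Amount>
  <Debtor>Acme GmbH</Debtor>
  <Creditor>Globex SA</Creditor>
  <InvoiceRef>INV-1001</InvoiceRef>
  <Channel>instant</Channel>
</Payment>
"""


def parse_payment(xml_text):
    """Turn each child element into a dictionary entry keyed by its tag name."""
    root = ET.fromstring(xml_text.strip())
    record = {child.tag: (child.text or "").strip() for child in root}
    amount = root.find("Amount")
    # The currency lives in an attribute, so it is read separately.
    record["Currency"] = amount.get("currency") if amount is not None else None
    return record


payment = parse_payment(PAYMENT_XML)
print(payment)
```

With a free-text legacy line, the same details would have to be recovered through position rules or pattern matching, which is where interpretation errors tend to creep in.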

The Challenge of Data Scalability and Security

The potential of big data is undeniable, but the challenges it brings in terms of security and access control are just as real. “Everyone can access it,” warned Jeffrey. Failure to protect the data can lead to breaches and non-compliance with regulatory standards. Therefore, safeguarding data is imperative, and businesses need to have the power and scale to do so.

In terms of technology, “we’ve reached the zenith,” Jeffrey said, highlighting the importance of choosing partners and buying technology that meet the needs of the organization today as well as in the future. For example, data lakes, as scalable storage repositories, offer a comprehensive solution for managing and securing vast amounts of data, ensuring it can be accessed and analyzed while maintaining the necessary security requirements.

Data Exploration with BI and NLP

The recent enhancements in BI tools, notably with artificial intelligence (AI) and machine learning (ML), have equipped users with predictive analytics, trend forecasting, and anomaly detection. By processing vast amounts of data and identifying complex patterns, AI and ML can provide data-driven predictions and actionable insights, helping treasury professionals make informed decisions more efficiently.
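Anomaly detection does not have to start with complex models. As a minimal sketch, and only as an assumption about how a basic check might work, the code below flags values that sit more than a chosen number of standard deviations from the mean (a z-score test). The sample outflows and the threshold of 2.0 are hypothetical; AI-powered BI tools generally rely on richer techniques.

```python
from statistics import mean, stdev


def flag_anomalies(values, threshold=2.0):
    """Return the positions of values whose z-score exceeds `threshold`."""
    mu = mean(values)
    sigma = stdev(values)  # sample standard deviation
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]


# Hypothetical daily outflows, in thousands, with one unusual payment.
daily_outflows = [100.0, 102.0, 98.0, 101.0, 99.0, 100.0, 250.0, 97.0, 103.0, 100.0]
anomalies = flag_anomalies(daily_outflows)
print(anomalies)
```

A flagged position is only a prompt for review: a large outflow may be a scheduled tax payment as easily as an error or a fraud attempt.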

Moreover, the inclusion of natural language processing (NLP) capabilities in BI tools has made data exploration more user-friendly and accessible. NLP is an AI technique that allows computers to understand, interpret, and respond to human language, transforming the way treasury professionals interact with data. This means users can now query data using simple language, similar to a search engine, thereby simplifying complex data manipulations.

Intuitive dashboards and visualizations enable self-serve analytics and reporting, allowing treasury professionals to explore data, identify trends and generate valuable insights independently, without the need for data engineers. They also allow real-time tracking of key performance indicators and metrics, so treasury professionals can respond swiftly to changing trends. Together, these capabilities transform the way professionals analyze, interact with, and derive value from data.

Conclusion

Today, data is no longer just a byproduct of operations; it is the foundation for driving innovation and gaining a competitive edge in the ever-evolving business landscape. Big data has the potential to revolutionize treasury operations, as it empowers treasury and finance teams to unlock insights and improve decision-making. Organizations can harness the full potential of big data in treasury if they embrace a data mindset, establish self-discovery processes, adopt structured data formats and use AI-powered BI tools.

Watch this on-demand session to learn more about big data, treasury analytics and AI in treasury.
